Probability theory¶

Probability theory is the mathematical foundation for statistics and is covered in chapter 3 of our text. In this portion of our outline, we'll look briefly at the beginning of that chapter.

What is probability?¶

- A random event is an event where we know which possible outcomes can occur but we don't know which specific outcome will occur.

- The set of all the possible outcomes is called the sample space.

- If we observe a large number of independent repetitions of a random event, then the proportion $\hat{p}$ of occurrences with a particular outcome converges to the probability $p$ of that outcome.

That last bullet point is called the Law of Large Numbers and is a pillar of probability theory.

The Law of Large Numbers¶

The Law of Large Numbers can be written symbolically as

$$P(A) \approx \frac{\# \text{ of occurrences of } A}{\# \text{ of trials}}.$$

More importantly, the error in the approximation approaches zero as the number of trials approaches $\infty$.

Again, this is a symbolic representation where:

- The symbol $A$ represents a set of possible outcomes contained in the sample space. Such a set is often called an event.

- The symbol $P$ represents a function that computes probability.

- Thus, $P(A)$ tells us the probability that the event $A$ occurs.

Visualizing LLN¶

In the visualization below, $n$ represents the number of experiments, $p$ represents the probability of success, and the graph shows the proportion of successes in the first $k$ experiments for each $k$ from $1$ to $n$. As $k$ increases, that proportion should converge to $p$.
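The experiment behind such a graph can be sketched in a few lines of Python. This is only an illustrative simulation: the function name `running_proportions`, the fixed seed, and the choices $n = 10{,}000$ and $p = 0.3$ are assumptions made for the example, not part of the original visualization.

```python
import random


def running_proportions(n, p, seed=0):
    """Simulate n independent trials, each succeeding with probability p.

    Returns a list whose entry k-1 is the proportion of successes
    among the first k trials.
    """
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    successes = 0
    proportions = []
    for k in range(1, n + 1):
        if rng.random() < p:  # one independent trial
            successes += 1
        proportions.append(successes / k)
    return proportions


props = running_proportions(n=10_000, p=0.3)
for k in (10, 100, 1_000, 10_000):
    print(k, round(props[k - 1], 4))
```

The printed proportions should drift toward $0.3$ as $k$ grows, though any single run will wobble, especially for small $k$.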

The role of Independence¶

It's important to understand that the observations in the Law of Large Numbers are independent: the separate occurrences have nothing to do with one another, and prior events cannot influence future events.

Example questions¶

- I flip a fair coin 5 times and it happens to come up heads each time. What is the probability that I get a tail on the next flip?

- I flip a fair coin 10 times and it happens to come up heads each time. About how many heads and how many tails do I expect to get in my next 10 flips?
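One way to test your intuition on the first question is to simulate it. The sketch below, which assumes a fair coin modeled by Python's `random` module (the variable names and the number of repetitions are arbitrary choices), flips six coins many times, keeps only the runs that start with five heads, and records how often the sixth flip is a tail.

```python
import random

rng = random.Random(1)  # fixed seed so the run is repeatable
streaks = 0             # runs whose first 5 flips are all heads
tails_after_streak = 0  # of those, runs whose 6th flip is a tail

for _ in range(200_000):
    flips = [rng.random() < 0.5 for _ in range(6)]  # True means heads
    if all(flips[:5]):
        streaks += 1
        if not flips[5]:
            tails_after_streak += 1

proportion = tails_after_streak / streaks
print(streaks, round(proportion, 3))
```

Because the flips are independent, the proportion should land near $1/2$: the streak of heads does not make a tail "due". The same reasoning applies to the second question.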

Computing probabilities¶

We now turn to the question of how to compute the probability of certain types of events, which is not so bad once you understand a few formulae and basic principles.

The serious, mathematical study of probability theory has its origins in the $17^{\text{th}}$ century in the writings of Blaise Pascal, who studied gambling. Many elementary examples in probability theory are still commonly phrased in terms of gambling. As a result, it's a long-standing tradition to use coin flips, dice rolls, and playing cards to illustrate the basic principles of probability. Thus, it helps to have a basic familiarity with these things.

Cards¶

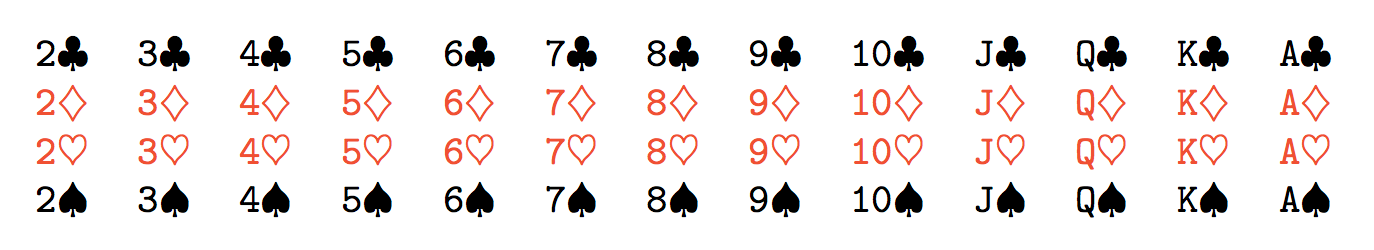

A standard deck of playing cards consists of 52 cards divided into 13 ranks, each of which is further divided into 4 suits:

- 13 ranks: A, 2, 3, 4, 5, 6, 7, 8, 9, 10, J, Q, K

- 4 suits: hearts, diamonds, clubs, spades

When we speak of a "well shuffled deck" we mean that, when we draw one card, each card is equally likely to be drawn. Similarly, when we speak of a fair coin or a fair die, we mean each outcome produced (heads/tails or 1-6) is equally likely to occur.

That brings us to our first formula for computing probabilities!

Equal likelihoods¶

When the sample space is a finite set and the individual outcomes are all equally likely, the probability of an event $A$ can be computed by

$$P(A) = \frac{\# \text{ of possibilities in } A}{\text{total } \# \text{ of possibilities}}.$$

Examples¶

Suppose we draw a card from a well shuffled standard deck of 52 playing cards.

- What's the probability that we draw the four of hearts?

- What's the probability that we draw a four?

- What's the probability that we draw a club?

- What's the probability that we draw a red king?
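The equal-likelihood formula amounts to counting, so these questions can be checked by building the deck and counting cards. The following is a minimal sketch; the names `RANKS`, `SUITS`, `DECK`, and `prob` are introduced here for illustration only.

```python
from fractions import Fraction
from itertools import product

RANKS = ["A", "2", "3", "4", "5", "6", "7", "8", "9", "10", "J", "Q", "K"]
SUITS = ["hearts", "diamonds", "clubs", "spades"]
DECK = list(product(RANKS, SUITS))  # 52 (rank, suit) pairs


def prob(event):
    """P(A) = (# of cards in A) / (total # of cards)."""
    favorable = sum(1 for card in DECK if event(card))
    return Fraction(favorable, len(DECK))


p_four_of_hearts = prob(lambda c: c == ("4", "hearts"))
p_four = prob(lambda c: c[0] == "4")
p_club = prob(lambda c: c[1] == "clubs")
p_red_king = prob(lambda c: c[0] == "K" and c[1] in ("hearts", "diamonds"))

print(p_four_of_hearts, p_four, p_club, p_red_king)  # 1/52 1/13 1/4 1/26
```

Using `Fraction` keeps the answers exact rather than as rounded decimals.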

Mutually exclusive events¶

The events $A$ and $B$ are called mutually exclusive if they cannot both occur. Another term for this same concept is disjoint. If we know the probability that $A$ occurs and we know the probability that $B$ occurs, then we can compute the probability that $A$ or $B$ occurs by simply adding the probabilities. Symbolically,

$$P(A \text{ or } B) = P(A) + P(B).$$

I emphasize, this formula is only valid under the assumption of disjointness.

Examples¶

We again draw a card from a well shuffled standard deck of 52 playing cards.

- What is the probability that we get an odd numbered playing card?

- What is the probability that we get a face card?

- What is the probability that we get an odd numbered playing card or get a face card?
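These three questions can be checked by counting. The sketch below assumes that "odd numbered" means the ranks 3, 5, 7, and 9 (the convention used in the computation later in these notes, so the ace is not counted) and that the face cards are J, Q, and K. The helper names are illustrative.

```python
from fractions import Fraction
from itertools import product

RANKS = ["A", "2", "3", "4", "5", "6", "7", "8", "9", "10", "J", "Q", "K"]
SUITS = ["hearts", "diamonds", "clubs", "spades"]
DECK = list(product(RANKS, SUITS))


def prob(event):
    """Equal-likelihood probability of an event over the 52-card deck."""
    return Fraction(sum(1 for card in DECK if event(card)), len(DECK))


def is_odd(card):
    # Assumption: "odd numbered" means 3, 5, 7, 9 (ace excluded).
    return card[0] in {"3", "5", "7", "9"}


def is_face(card):
    return card[0] in {"J", "Q", "K"}


# No card is both odd numbered and a face card, so the events are disjoint.
overlap = sum(1 for c in DECK if is_odd(c) and is_face(c))

p_odd = prob(is_odd)
p_face = prob(is_face)
p_either = prob(lambda c: is_odd(c) or is_face(c))

print(overlap, p_odd, p_face, p_either)  # 0 4/13 3/13 7/13
print(p_odd + p_face == p_either)        # True
```

Counting the combined event directly gives the same value as adding the two probabilities, which is exactly what the addition rule for disjoint events promises.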

A more general addition rule¶

If two events are not mutually exclusive, we have to account for the possibility that both occur. Symbolically:

$$P(A \text{ or } B) = P(A) + P(B) - P(A \text{ and } B).$$

Examples¶

Yet again, we draw a card from a well shuffled standard deck of 52 playing cards.

- What is the probability that we get a face card or we get a red card?

- What is the probability that we get an odd numbered card or we get a club?

Computation¶

Now, things are getting a little tougher so let's include the computation for that second one right here. Let's define our symbols and say

\begin{align*} A &= \text{we draw an odd numbered card} \\ B &= \text{we draw a club}. \end{align*}

In a standard deck, there are four odd numbers we can draw: 3, 5, 7, and 9; and four of each of those for a total of 16 odd numbered cards. Thus, $$P(A) = \frac{16}{52} = \frac{4}{13}.$$ There are also 13 clubs so that $$P(B) = \frac{13}{52} = \frac{1}{4}.$$

Now, there are four odd numbered cards that are clubs - the 3 of clubs, the 5 of clubs, the 7 of clubs, and the 9 of clubs. Thus, the probability of drawing an odd numbered card that is also a club is $$P(A \text{ and } B) = \frac{4}{52} = \frac{1}{13}.$$ Putting this all together, we get $$P(A \text{ or } B) = \frac{4}{13} + \frac{1}{4} - \frac{1}{13} = \frac{25}{52}.$$
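The same bookkeeping can be done by counting cards, which gives a check on both examples. This is a sketch with illustrative helper names; as in the computation above, "odd numbered" is taken to mean 3, 5, 7, and 9.

```python
from fractions import Fraction
from itertools import product

RANKS = ["A", "2", "3", "4", "5", "6", "7", "8", "9", "10", "J", "Q", "K"]
SUITS = ["hearts", "diamonds", "clubs", "spades"]
DECK = list(product(RANKS, SUITS))


def prob(event):
    """Equal-likelihood probability of an event over the 52-card deck."""
    return Fraction(sum(1 for card in DECK if event(card)), len(DECK))


def addition_rule(a, b):
    """P(A or B) computed as P(A) + P(B) - P(A and B)."""
    return prob(a) + prob(b) - prob(lambda c: a(c) and b(c))


is_odd = lambda c: c[0] in {"3", "5", "7", "9"}
is_club = lambda c: c[1] == "clubs"
is_face = lambda c: c[0] in {"J", "Q", "K"}
is_red = lambda c: c[1] in {"hearts", "diamonds"}

p_odd_or_club = addition_rule(is_odd, is_club)
p_face_or_red = addition_rule(is_face, is_red)

# Direct counts of the "or" events, for comparison with the rule.
direct_odd_or_club = prob(lambda c: is_odd(c) or is_club(c))
direct_face_or_red = prob(lambda c: is_face(c) or is_red(c))

print(p_odd_or_club, direct_odd_or_club)  # 25/52 25/52
print(p_face_or_red, direct_face_or_red)  # 8/13 8/13
```

Subtracting $P(A \text{ and } B)$ removes the cards that would otherwise be counted twice, once in $A$ and once in $B$.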

Independent events¶

When two events are independent, we can compute the probability that both occur by multiplying their probabilities. Symbolically, $$P(A \text{ and } B) = P(A)P(B).$$

Examples¶

Suppose I flip a fair coin twice.

- What's the probability that I get 2 heads?

- What's the probability that I get a head and a tail?

- Now suppose I flip the coin 4 times. What's the probability that I get 4 heads?
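For coin flips the sample space is small enough to list in full, so the multiplication rule can be compared against direct counting. In this sketch, "a head and a tail" is read as one of each in either order, which is an interpretation of the question; the helper `prob` is an illustrative name.

```python
from fractions import Fraction
from itertools import product


def prob(event, flips):
    """Equal-likelihood probability over all sequences of the given length."""
    outcomes = list(product("HT", repeat=flips))  # e.g. ('H', 'T')
    return Fraction(sum(1 for o in outcomes if event(o)), len(outcomes))


half = Fraction(1, 2)  # probability of heads (or tails) on one fair flip

p_two_heads = prob(lambda o: o == ("H", "H"), flips=2)
p_head_and_tail = prob(lambda o: sorted(o) == ["H", "T"], flips=2)
p_four_heads = prob(lambda o: all(f == "H" for f in o), flips=4)

print(p_two_heads, p_two_heads == half * half)              # 1/4 True
print(p_head_and_tail, p_head_and_tail == 2 * half * half)  # 1/2 True
print(p_four_heads, p_four_heads == half ** 4)              # 1/16 True
```

The middle case combines two rules: HT and TH are disjoint outcomes, each with probability $\frac{1}{2} \times \frac{1}{2}$ by independence, so their probabilities add.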

Conditional probability¶

When two events $A$ and $B$ are not independent, we can use a generalized form of the multiplication rule to compute the probability that both occur, namely $$P(A \text{ and } B) = P(A)P(B|A).$$ That last term, $P(B|A)$, denotes the conditional probability that $B$ occurs under the assumption that $A$ occurs.

Example¶

We draw two cards from a well-shuffled deck. What is the probability that both are hearts?

Computation¶

The probability that the first card is a heart is $13/52=1/4$. The probability that the second card is a heart is different because, once a heart has been drawn, we have a deck with 51 cards and 12 hearts. Thus, the probability of getting a second heart is $12/51 = 4/17$, and the answer to the question is $$\frac{1}{4}\times\frac{4}{17} = \frac{1}{17}.$$ To write this symbolically, we might let

- $A$ denote the event that the first draw is a heart and

- $B$ denote the event that the second draw is a heart.

Then, we express the above as $$P(A \text{ and } B) = P(A)P(B\,|\,A) = \frac{1}{4}\times\frac{4}{17} = \frac{1}{17}.$$
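As a check on this computation, we can list every ordered pair of distinct cards and count the pairs in which both are hearts. This is a sketch with illustrative variable names; it assumes the two cards are drawn without replacement, as in the computation above.

```python
from fractions import Fraction
from itertools import permutations, product

RANKS = ["A", "2", "3", "4", "5", "6", "7", "8", "9", "10", "J", "Q", "K"]
SUITS = ["hearts", "diamonds", "clubs", "spades"]
DECK = list(product(RANKS, SUITS))

# Every ordered (first card, second card) pair without replacement: 52 * 51.
draws = list(permutations(DECK, 2))

both_hearts = sum(
    1 for first, second in draws
    if first[1] == "hearts" and second[1] == "hearts"
)

p_enumerated = Fraction(both_hearts, len(draws))
p_rule = Fraction(13, 52) * Fraction(12, 51)  # P(A) * P(B | A)

print(len(draws), both_hearts)  # 2652 156
print(p_enumerated, p_rule)     # 1/17 1/17
```

The count $156 = 13 \times 12$ mirrors the two factors in the multiplication rule: 13 choices for the first heart, then 12 for the second.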

Turning the computation around¶

Sometimes, it is the computation of conditional probability itself that we are interested in. Thus, we might rewrite our general multiplication rule in the form $$P(B\,|\,A) = \frac{P(A \text{ and } B)}{P(A)}.$$

Example¶

What is the probability that a card is a heart, given that it's red?
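One way to work this example is to apply the formula directly to counts from the deck. The sketch below uses illustrative helper names and takes $A$ to be "the card is red" and $B$ to be "the card is a heart".

```python
from fractions import Fraction
from itertools import product

RANKS = ["A", "2", "3", "4", "5", "6", "7", "8", "9", "10", "J", "Q", "K"]
SUITS = ["hearts", "diamonds", "clubs", "spades"]
DECK = list(product(RANKS, SUITS))


def prob(event):
    """Equal-likelihood probability of an event over the 52-card deck."""
    return Fraction(sum(1 for card in DECK if event(card)), len(DECK))


is_red = lambda c: c[1] in {"hearts", "diamonds"}
is_heart = lambda c: c[1] == "hearts"

p_red = prob(is_red)                                         # P(A)
p_heart_and_red = prob(lambda c: is_heart(c) and is_red(c))  # P(A and B)
p_heart_given_red = p_heart_and_red / p_red                  # P(B | A)

print(p_red, p_heart_and_red, p_heart_given_red)  # 1/2 1/4 1/2
```

Conditioning on "red" shrinks the sample space to the 26 red cards, of which 13 are hearts.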

Data¶

In statistics, we'd often like to compute probabilities from data. For example, here's a contingency table based on our CDC data relating smoking and exercise:

| exer/smoke | 0 | 1 | All |
|---|---|---|---|
| 0 | 0.12715 | 0.12715 | 0.2543 |
| 1 | 0.40080 | 0.34490 | 0.7457 |
| All | 0.52795 | 0.47205 | 1.0000 |

From here, it's pretty easy to read off basic probabilities or compute conditional probabilities.

Examples¶

- What is the probability that someone from this sample exercises?

- What is the probability that someone from this sample exercises and smokes?

- What is the probability that someone smokes, given that they exercise?

Answers¶

- The probability that someone exercises is $0.7457$.

- The probability that someone exercises and smokes is $0.34490$.

- The probability that someone smokes, given that they exercise, is

$$P(\text{smokes}\,|\,\text{exercises}) = \frac{P(\text{smokes and exercises})}{P(\text{exercises})} = \frac{0.3449}{0.7457} \approx 0.462518.$$
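These answers can be reproduced from the four interior cells of the table. The sketch below hard-codes those proportions in a dictionary keyed by `(exercises, smokes)`; the names `joint` and `marginal` are illustrative, and nothing here reads the original CDC data.

```python
# Joint proportions copied from the table; keys are (exercises, smokes).
joint = {
    (0, 0): 0.12715,
    (0, 1): 0.12715,
    (1, 0): 0.40080,
    (1, 1): 0.34490,
}


def marginal(index, value):
    """Sum the joint proportions where the given variable equals value.

    index 0 selects the exercise variable, index 1 the smoking variable.
    """
    return sum(p for key, p in joint.items() if key[index] == value)


p_exercise = marginal(0, 1)                   # the "All" entry of row 1
p_both = joint[(1, 1)]                        # exercises and smokes
p_smoke_given_exercise = p_both / p_exercise  # conditional probability

print(round(p_exercise, 4), p_both, round(p_smoke_given_exercise, 4))
```

The marginal is just the row total from the "All" column, and the conditional probability comes out to roughly $0.4625$, matching the answer above.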